aiDAPTIV+ puts enterprise-grade AI workloads in your hands affordably, privately, and wherever you go. It extends GPU memory, enables cost-effective on-premises AI, and provides a complete ‘LLM training-in-a-box’ toolset for ease of use.

How it works

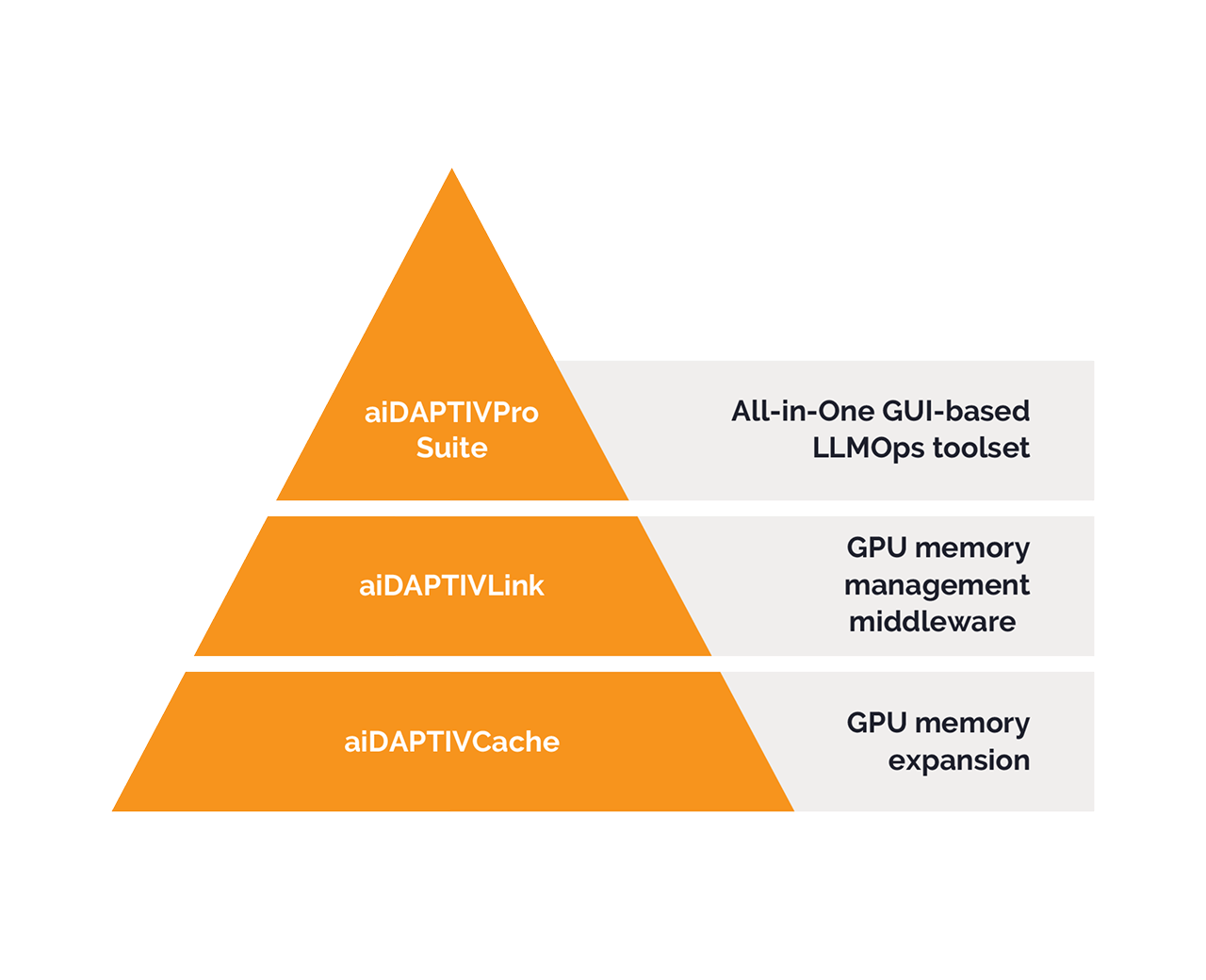

aiDAPTIV+ combines optimized memory-management middleware, flash-boosted memory, an all-in-one AI toolset (CLI or optional GUI) and seamless GPU integration to deliver fast, secure AI training at scale.

The aiDAPTIV+ middleware layer is compatible with PyTorch, CUDA and NeMo and does not require changes to your models. Combined with aiDAPTIVCache SSDs, it delivers up to 8TB of extended memory with low latency and extreme endurance.
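To put the 8TB figure in context, here is a rough back-of-the-envelope sketch of how much memory model training state can take. It rests on a common rule of thumb, not on anything from aiDAPTIV+ documentation: mixed-precision training with the Adam optimizer needs roughly 16 bytes per parameter for weights, gradients and optimizer state, before counting activations. The 70-billion-parameter model size and the function names are purely illustrative.

```python
# Rough sizing sketch. Assumption (not from aiDAPTIV+ documentation):
# mixed-precision training with Adam keeps about 16 bytes per parameter
# (2 for fp16 weights, 2 for fp16 gradients, 12 for fp32 master weights
# and the two Adam moment estimates). Activations are not counted.
BYTES_PER_PARAM = 16

# "Up to 8TB of extended memory", taken as 8 * 10^12 bytes.
EXTENDED_MEMORY_BYTES = 8 * 10**12


def training_state_bytes(num_params, bytes_per_param=BYTES_PER_PARAM):
    """Estimate bytes needed for weights, gradients and optimizer state."""
    return num_params * bytes_per_param


def fits_in_extended_memory(num_params, capacity_bytes=EXTENDED_MEMORY_BYTES):
    """Return True if the estimated training state fits in the given capacity."""
    return training_state_bytes(num_params) <= capacity_bytes


if __name__ == "__main__":
    params = 70 * 10**9  # hypothetical 70-billion-parameter model
    needed_tb = training_state_bytes(params) / 1e12
    print(f"Estimated training state: {needed_tb:.2f} TB")
    print(f"Fits in 8 TB: {fits_in_extended_memory(params)}")
```

Under these assumptions, the training state of such a model is far larger than the memory on a typical single GPU card, yet it fits comfortably within an 8TB extended memory pool.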

Why your business needs aiDAPTIV+

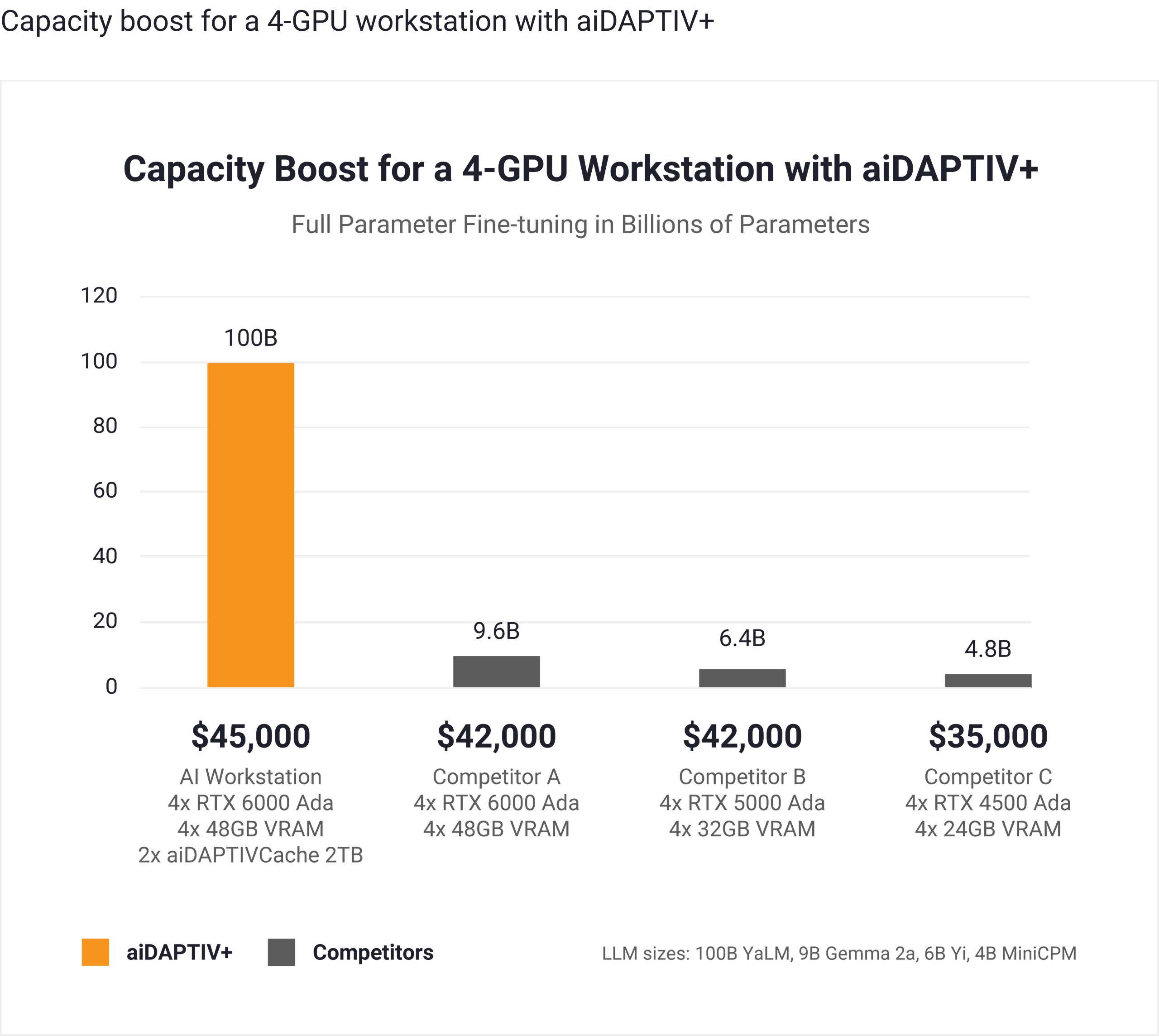

Training custom AI models with aiDAPTIV+ removes the complexity, cost, and privacy barriers, so you can unlock real value from your data within hours, not weeks. Gain a substantial increase in AI model capacity using local, small-scale infrastructure.

Comparison of aiDAPTIV+, Cloud, and GPU-card data center builds across: Enterprise-class power, Simple plug-and-play, Cost-effective, Data privacy, Simple scaling, Accelerated inference.

Improved inference

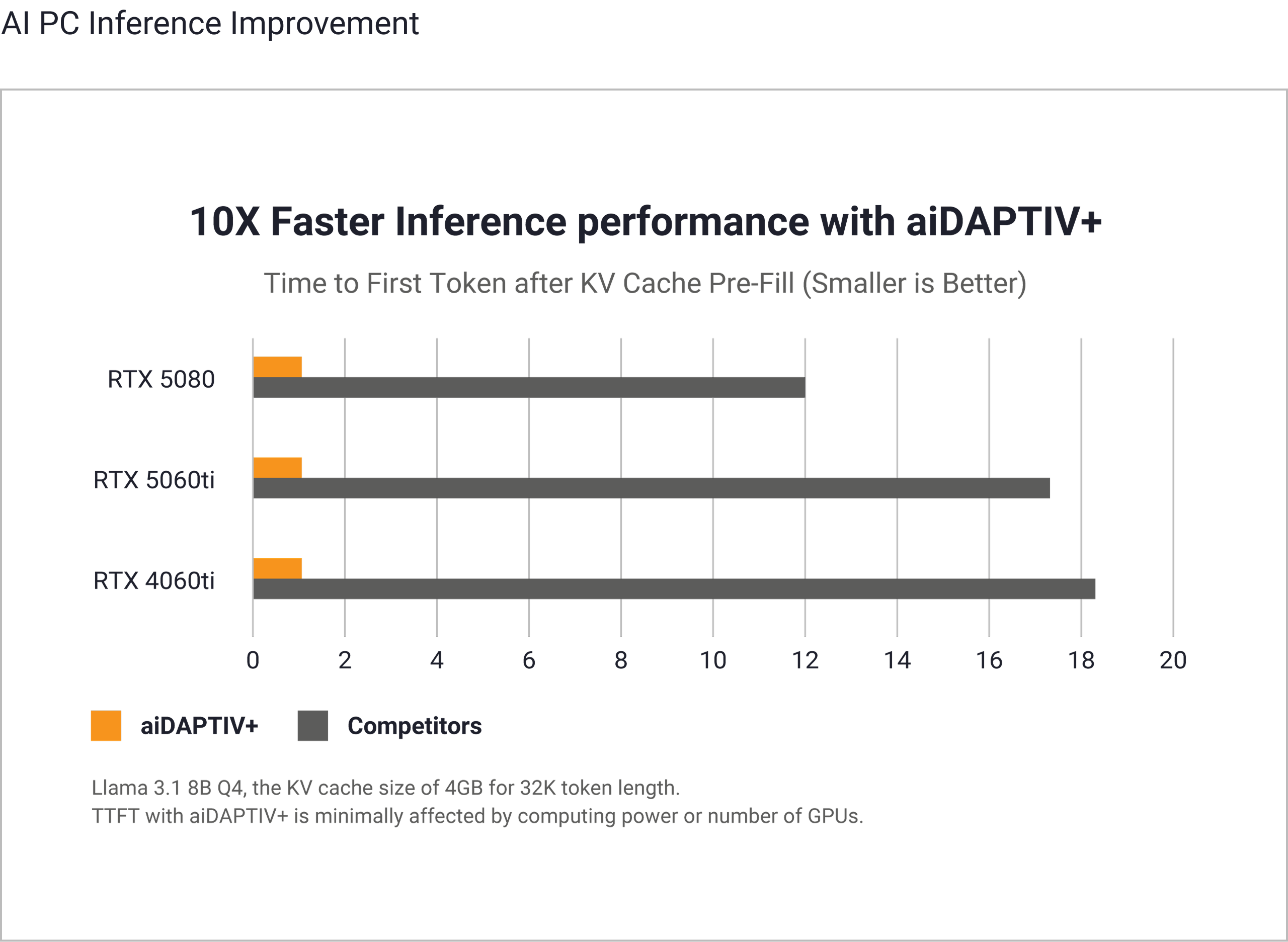

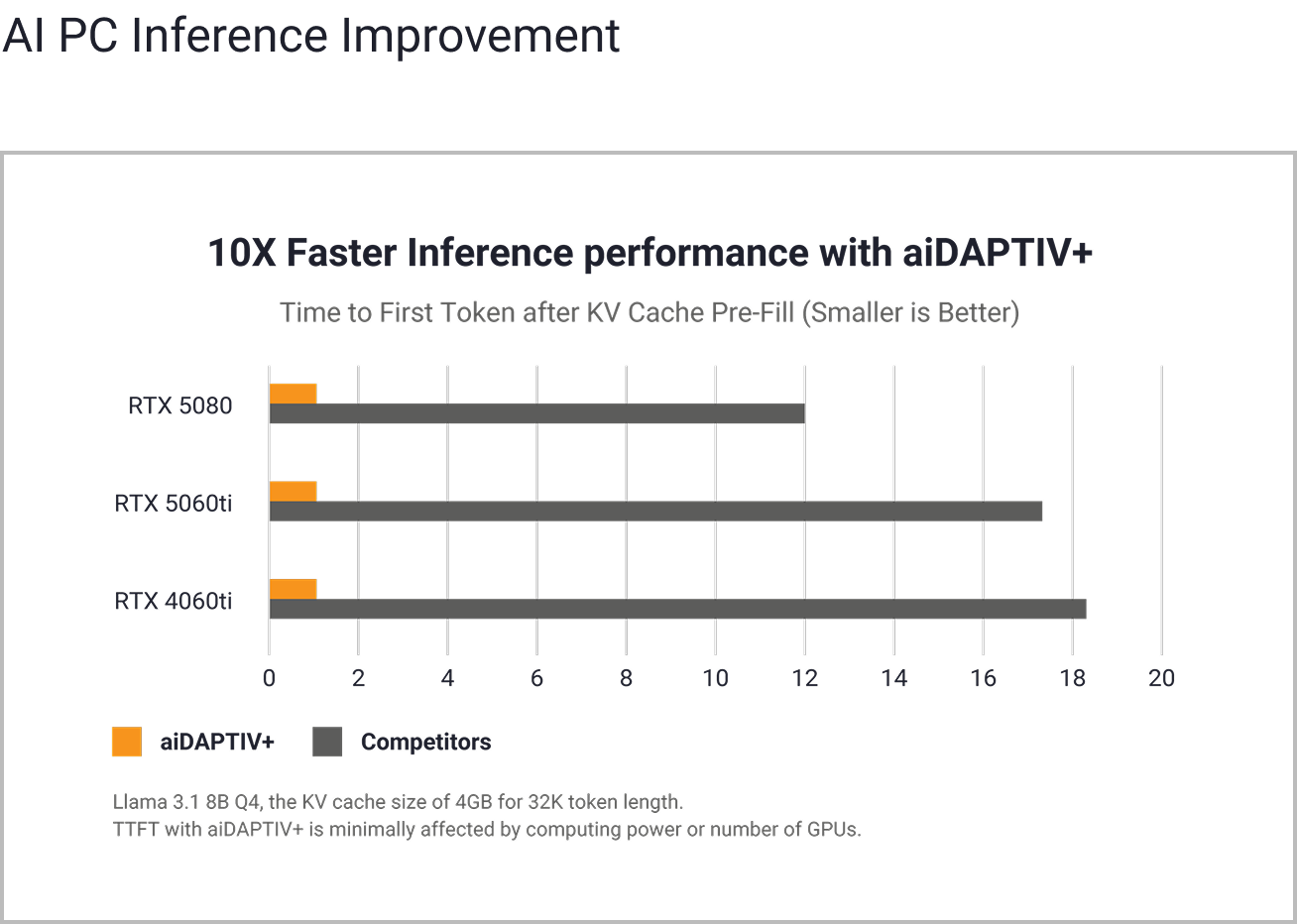

Using flash-accelerated memory and middleware optimization, aiDAPTIV+ dramatically reduces Time-To-First-Token (TTFT) across GPU configurations. Even under heavy load, aiDAPTIV+ consistently delivers responses up to 10X faster by eliminating the need to recalculate evicted tokens, making your AI feel faster, more fluid, and more accurate.
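The idea of avoiding recomputation can be illustrated with a toy two-tier cache. This is a minimal sketch under stated assumptions, not the aiDAPTIV+ implementation: the class name `TieredCache`, the LRU eviction policy and the in-memory dictionary standing in for flash storage are all hypothetical. When the fast tier is full, the oldest entry is spilled to the slower tier instead of being discarded, so a later request reloads it rather than recomputing it.

```python
from collections import OrderedDict


class TieredCache:
    """Toy two-tier cache: a small fast tier backed by a larger slow tier.

    Hypothetical illustration only. The fast tier stands in for GPU memory
    and the slow tier for flash-backed storage.
    """

    def __init__(self, fast_capacity, compute_fn):
        self.fast = OrderedDict()  # least recently used entry comes first
        self.slow = {}             # holds entries evicted from the fast tier
        self.fast_capacity = fast_capacity
        self.compute_fn = compute_fn
        self.recomputes = 0        # how many times compute_fn was called

    def get(self, key):
        if key in self.fast:
            # Hit in the fast tier: mark as most recently used.
            self.fast.move_to_end(key)
            return self.fast[key]
        if key in self.slow:
            # Hit in the slow tier: reload instead of recomputing.
            value = self.slow.pop(key)
        else:
            # Miss in both tiers: pay the full computation cost.
            self.recomputes += 1
            value = self.compute_fn(key)
        self._put_fast(key, value)
        return value

    def _put_fast(self, key, value):
        self.fast[key] = value
        self.fast.move_to_end(key)
        while len(self.fast) > self.fast_capacity:
            # Spill the least recently used entry to the slow tier.
            old_key, old_value = self.fast.popitem(last=False)
            self.slow[old_key] = old_value


if __name__ == "__main__":
    cache = TieredCache(fast_capacity=2, compute_fn=lambda k: k * 2)
    for k in (1, 2, 3):
        cache.get(k)              # three computations; key 1 spills to slow
    cache.get(1)                  # served from the slow tier
    print(cache.recomputes)
```

In this sketch, the fourth request is answered without calling `compute_fn` again. A cache that simply dropped evicted entries would have to recompute the value.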

System Configurations Tested:

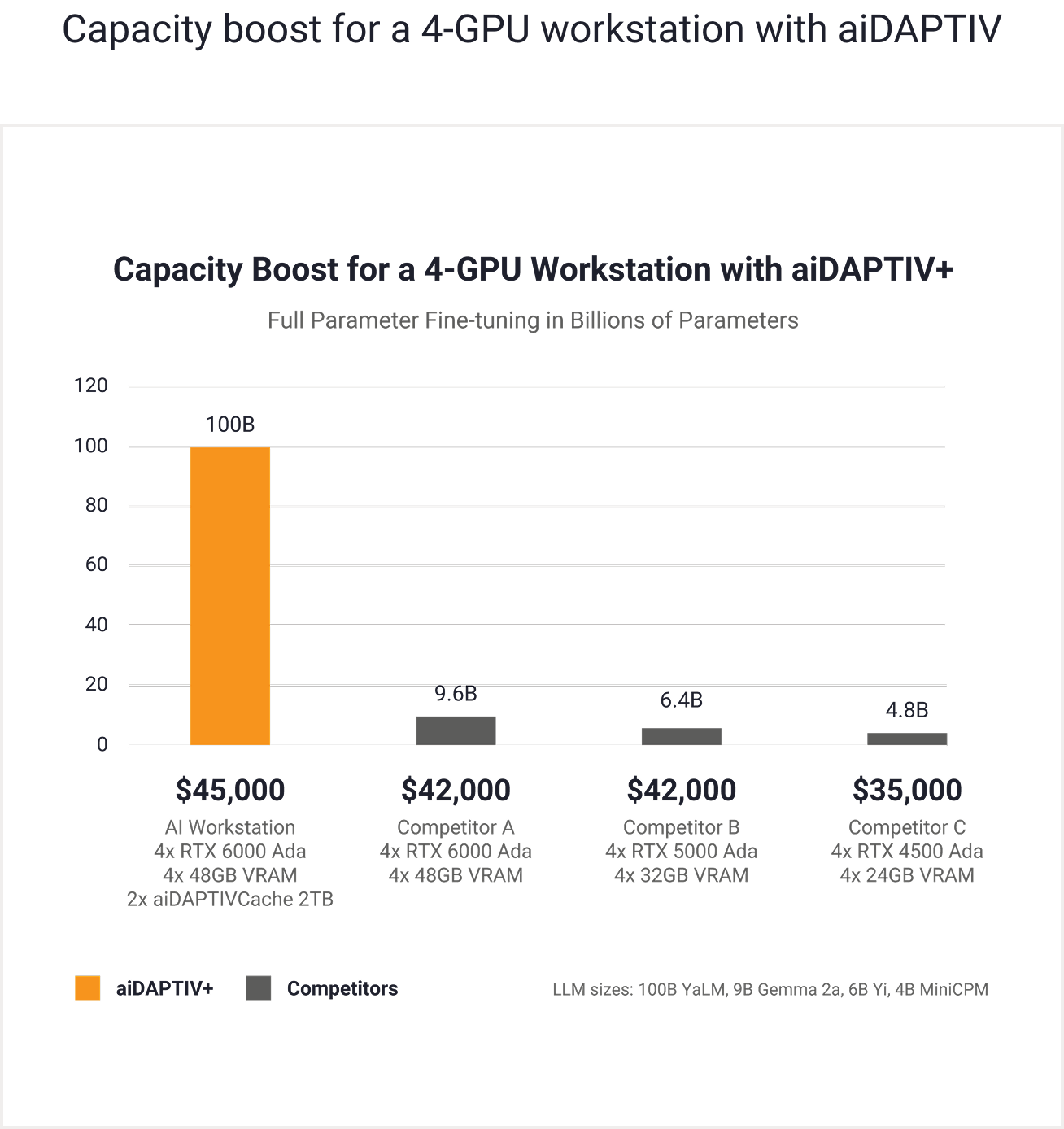

Fine-tuning at scale

Resources for setup and support

Whether you’re integrating with a laptop PC, desktop PC, or engineering workstation, or deploying at scale, our how-to guides and documentation make setup quick and simple.

Step-by-step instructions to help you set up and start training models quickly.

Install and configure aiDAPTIVPro Suite with ease.

Learn how to use every feature for end-to-end model training and inferencing.

Ways to buy

aiDAPTIV+ makes AI processing possible on a range of small-scale devices by extending the memory available to the GPU. This lets you use cost-effective hardware with only as many GPU cards as you need, in place of expensive AI servers or GPU cloud services.

aiDAPTIV+ is available in multiple personal computer and workstation form factors and is ready to go out of the box.

Where to buy

Available now via your trusted sources, including Newegg and MAINGEAR. Choose the aiDAPTIV+ setup that fits your team—and start training AI today.

Have a question about how aiDAPTIV+ works in your environment? Need help selecting the right solution or understanding performance expectations?

We’re here to help—from technical queries to purchasing decisions. Fill out the form and a member of the aiDAPTIV+ team will get back to you promptly.